From Dashboard to Co-pilot: Why Traditional Retail Intelligence Tools Fall Short in the Era of LLMs

TL;DR

Traditional Retail Intelligence struggles to convert data into fast, actionable decisions. LLMs change this paradigm by enabling natural language interaction, greater contextualisation, and proactive analysis. Solutions like flipflow’s Tyrell AI put this approach into practice, turning analysis into a co-pilot that guides real-time decisions.

Introduction: The BI Glass Ceiling

The retail sector has been investing millions in Business Intelligence platforms for years. Dashboards with dozens of KPIs, automated reports, stock alerts, sell-out comparisons by channel… All of this exists, works, and has value. But there comes a point where the teams working with these tools hit an invisible wall: the data is there, but transforming it into fast, contextualised, and actionable decisions remains slow, manual, and dependent on technical profiles.

This glass ceiling is not a problem of data quantity. Many companies have more data than they can process. The problem lies in how those tools are designed to interact with information, and for whom.

Large Language Models (LLMs) are cracking that ceiling. However, before discussing what is changing, it is worth understanding exactly what Retail Intelligence is today and where it fails.

What we understand by Retail Intelligence today

Retail Intelligence encompasses the set of tools, processes, and methodologies that allow manufacturers, distributors, and retailers to collect, process, and analyse market data to understand on-shelf performance, customer behaviour, and market dynamics, with the aim of translating that data into concrete decisions. It includes both physical and digital channels, connecting metrics for traffic, conversion, prices, promotions, and availability with business results.

In practice, a Retail Intelligence system relies on four pillars:

- Data collection from POS (Point of Sale), e-commerce, marketplaces, in-store sensors, and footfall counters.

- Integration of internal data (sales, inventory) with external signals (competitor prices, reviews, promotions, panels).

- Analytics and visualisation through dashboards, periodic reports, and automated alerts.

- Decision support, connecting insights with actions such as assortment changes, price adjustments, or modifications to planograms.

The objective is clear: to transform data into business visibility. The problem arises when that visibility does not turn into action quickly enough.

Traditional Retail Intelligence excels at the descriptive side. It answers questions like “what was sold”, “where did turnover drop”, or “which promotion worked best”. It finds it harder to connect causes, context, and next steps. This gap widens in an omnichannel environment, where the volume of information keeps growing and relevant signals are scattered across structured and unstructured sources.

Where Traditional Retail Intelligence Falls Short

Traditional Retail Intelligence solutions have provided a lot of value, but they were designed for a less volatile environment, with fewer data sources and less need for real-time response. This legacy creates friction when they are required to operate at the pace of modern retail.

It looks at the past more than the present

Classic BI tools are designed to analyse closed results: the previous week, monthly closing, or quarterly evolution. This approach provides visibility but arrives late in markets that change daily.

In high-turnover categories or those with strong promotional pressure — such as household cleaning, fresh food, or drinks — this delay can mean losing position to more agile competitors.

According to Salesforce’s Shopping Index, consumer behaviour is increasingly dynamic, with purchase journeys that combine channels, devices, and times of day. In this context, purely retrospective analysis limits the ability to react: by the time a problem is detected, part of the opportunity has already been lost.

Even so, real-time or near-real-time analysis remains the exception in much of the FMCG (Fast-Moving Consumer Goods) sector.

Data silos and low granularity

Consumer panels measure households, not individual transactions. Distributor till data has product and store granularity, but it is not always shared systematically. Data from the sales force captures what field reps report during their visits, with all the variability that implies. And e-commerce data operates with a completely different logic.

The result is a fragmented ecosystem in which the same KPI (for example, the market share of an SKU in a supermarket chain) can vary significantly depending on the source consulted. Reconciling these differences consumes analytical time that could be spent generating value.
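One modest way to make that disagreement visible, rather than silently picking one number, is to compare the estimates side by side and flag the KPI when they diverge too much. The sketch below is illustrative only: the source labels, the share values, and the 1-percentage-point tolerance are hypothetical assumptions, not real market data or an industry standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ShareEstimate:
    source: str       # hypothetical source label, e.g. "panel", "till", "sales_force"
    share_pct: float  # market share of one SKU in one chain, in percent


def reconcile_share(estimates: list[ShareEstimate], tolerance_pp: float = 1.0) -> dict:
    """Summarise how far several sources disagree on the same KPI.

    tolerance_pp is an assumed threshold in percentage points: when the
    spread between sources exceeds it, the KPI is flagged for review
    instead of being reported as a single number.
    """
    if not estimates:
        raise ValueError("at least one estimate is required")
    values = [e.share_pct for e in estimates]
    spread = max(values) - min(values)
    return {
        "min_pct": min(values),
        "max_pct": max(values),
        "spread_pp": round(spread, 2),
        "needs_review": spread > tolerance_pp,
    }


# Illustrative values only, not real market data.
estimates = [
    ShareEstimate("panel", 12.4),
    ShareEstimate("till", 14.1),
    ShareEstimate("sales_force", 13.0),
]
print(reconcile_share(estimates))
```

Reporting the spread alongside the figure lets the reader see how much weight a single market-share number can bear.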

Furthermore, levels of disaggregation do not always allow for the analysis the business needs: understanding what is happening with a specific reference in a specific chain and region usually requires customisation, as many providers only offer data aggregated by category or retailer. This lack of granularity limits analysis at the SKU, store, format, or attribute level, making it difficult to identify which variants are gaining traction or which points of sale are consistently experiencing stockouts.

Insights don’t scale well

In many organisations, access to insights depends on a few profiles: analysts, BI teams, or data specialists. They build queries, prepare reports, and answer business questions. This process works reasonably well when there are few markets, few categories, and few interlocutors.

The problem arises when the entire company wants fast answers. The category manager needs to understand a margin deviation. Trade marketing wants to compare promotions across regions. Operations needs to detect an anomaly in availability. If every new question requires a ticket, an extraction, and manual validation, the insight scales poorly.

Insight teams become bottlenecks. Requests pile up. And decisions are made with incomplete or outdated information.

Fragmentation of sources

Current retail generates useful information in many formats and channels:

- Transactional data

- Store images and audits

- Product reviews

- Support tickets

- Customer conversations

- Internal reports

- Competitor price monitoring

A medium-sized FMCG organisation might work simultaneously with consumer panel data, till data from one or several distributors, its own CRM, a point-of-sale execution tool, e-commerce platforms with their own analytics, social media data, and digital shelf analytics tools.

Each of these sources has its own structure, its own cadence, its own access logic, and its own management team. Very few organisations have managed to integrate them coherently into a unified analytical layer. Most work with each source separately, crossing data manually when necessary.

This fragmentation generates inconsistencies, interpretation errors, and a partial view of market reality.

Too much reporting, too little action

The focus on producing reports and dashboards often leads to a paradox: an abundance of charts but a scarcity of decisions. Many dashboards are limited to showing indicators without translating them into operational recommendations, forcing the user to interpret for themselves what to do about margin variations or drops in penetration.

This gap between insight and execution widens when information is not connected to action systems (such as pricing, promotions, or inventory planning) and when the format itself (tables, charts, comparisons) demands additional cognitive effort to interpret and prioritise. The result is BI that has optimised report generation but not the activation of change: data is consumed, but rarely converted into decisions.

Quality, matching, and maintenance issues

Beyond analytical limitations, there is a constant operational problem: data quality. Product coding errors, nomenclature changes between distributors, matching problems between own references and those at the point of sale, duplicate or missing data… All of this generates continuous maintenance that consumes resources and erodes trust in the analysis.

In categories with wide portfolios or high reference turnover, this problem is especially acute. Product master tables become outdated. Matching algorithms fail with new launches. And the data team spends part of its time cleaning instead of analysing.

Every new source requires rules, mappings, and ongoing maintenance. When the base data is flawed, the dashboard gains in aesthetics but loses in reliability.
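To make the matching problem concrete, here is a minimal sketch of one common approach: normalise product descriptions (case, units, punctuation) and then score them with Python's standard `difflib.SequenceMatcher`. The product master, the reference codes, and the 0.8 threshold are all hypothetical. Anything below the threshold, such as a new launch, is returned as unmatched so a person can map it instead of the system guessing.

```python
import re
from difflib import SequenceMatcher

# Hypothetical product master: own reference code -> canonical description.
MASTER = {
    "REF-001": "Lemon dishwashing liquid 750 ml",
    "REF-002": "Lemon dishwashing liquid 1.5 l",
    "REF-003": "Orange floor cleaner 1 l",
}

UNIT = re.compile(r"(\d+(?:[.,]\d+)?)\s*(ml|l)\b")


def _to_ml(match: re.Match) -> str:
    """Rewrite a volume such as '1,5l' or '1.5 l' as '1500 ml'."""
    value = float(match.group(1).replace(",", "."))
    if match.group(2) == "l":
        value *= 1000
    return f"{round(value)} ml"


def normalise(text: str) -> str:
    """Lower-case, unify volume units and drop punctuation before comparing."""
    text = UNIT.sub(_to_ml, text.lower())
    text = re.sub(r"[^a-z0-9 ]+", " ", text)
    return " ".join(text.split())


def best_match(description: str, threshold: float = 0.8):
    """Return (reference, score) for the closest master entry, or (None, score)
    when nothing clears the assumed threshold and a human should map it."""
    target = normalise(description)
    score, ref = max(
        (SequenceMatcher(None, target, normalise(desc)).ratio(), ref)
        for ref, desc in MASTER.items()
    )
    return (ref if score >= threshold else None), round(score, 2)


print(best_match("LEMON DISHWASHING LIQ. 1,5L"))  # distributor nomenclature
print(best_match("Mint bathroom spray 500ml"))    # new launch, not in the master
```

Note that size variants of the same product (750 ml vs 1.5 l) score close to each other on text alone. This is one reason why matching on structured attributes such as barcodes, where they are available, tends to be more reliable than text similarity by itself.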

What Changes with the Arrival of LLMs

Large-scale language models are not simply an incremental improvement over existing tools. They represent a shift in the layer of interaction with data, in contextualisation capability, and in the speed with which an organisation can move from data to action.

These models make it possible to connect multiple structured and unstructured sources, translate them into natural language, and generate responses and recommendations that are closer to how commercial, marketing, or operations teams think. This reduces adoption friction and opens up data intelligence to profiles that previously stayed on the surface of the dashboard.

1. Natural language interaction with data

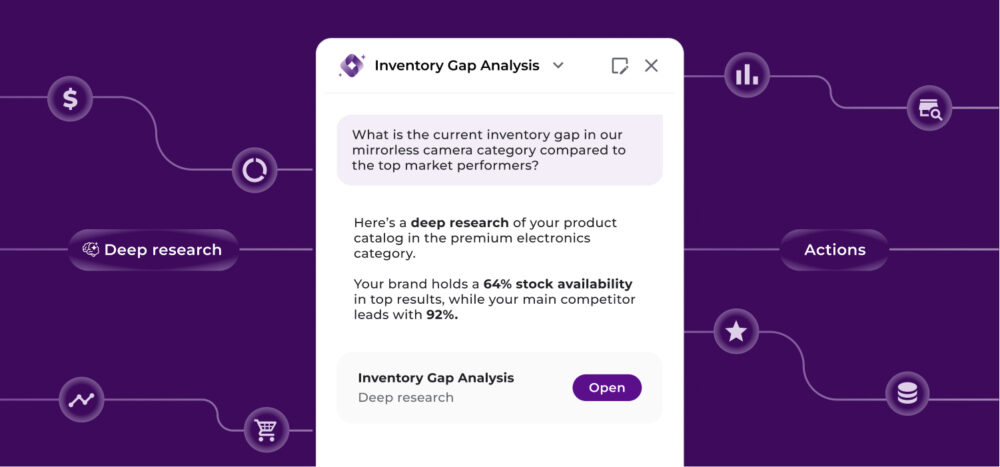

The first and most obvious transformation is the interface. With an LLM connected to the right data sources, a KAM (Key Account Manager) can ask: “In which chains has the sell-out of our main reference dropped the most in the last four weeks, and how does it compare to the market?” and receive a structured response without needing to open a dashboard, apply filters, or wait for the insights team to build the analysis.
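In a typical setup, the LLM's role would be to translate a question like the KAM's into a structured query. The numbers themselves would then be computed by ordinary code over the underlying data. The sketch below shows only that deterministic calculation, under stated assumptions: the `WeeklySellOut` record, its fields, consecutive week indices, and the data are all hypothetical, and the language-to-query translation step is not shown.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class WeeklySellOut:
    chain: str
    week: int               # consecutive week index (assumed)
    reference_units: float  # sell-out of the main reference in that chain
    market_units: float     # sell-out of the whole category in that chain


def pct_change(recent: float, previous: float) -> float:
    return (recent - previous) / previous * 100 if previous else float("nan")


def sell_out_change_by_chain(rows, last_week: int, window: int = 4):
    """Compare the last `window` weeks with the `window` weeks before them.

    Returns (chain, reference_change_pct, market_change_pct), biggest drop first.
    """
    recent = range(last_week - window + 1, last_week + 1)
    previous = range(last_week - 2 * window + 1, last_week - window + 1)
    # totals[chain][period] = [reference_units, market_units]
    totals = defaultdict(lambda: {"recent": [0.0, 0.0], "previous": [0.0, 0.0]})
    for row in rows:
        if row.week in recent:
            period = "recent"
        elif row.week in previous:
            period = "previous"
        else:
            continue  # outside the two comparison windows
        totals[row.chain][period][0] += row.reference_units
        totals[row.chain][period][1] += row.market_units
    result = [
        (
            chain,
            round(pct_change(t["recent"][0], t["previous"][0]), 1),
            round(pct_change(t["recent"][1], t["previous"][1]), 1),
        )
        for chain, t in totals.items()
    ]
    return sorted(result, key=lambda item: item[1])


# Illustrative data: chain A loses reference sell-out faster than its market.
rows = [WeeklySellOut("A", w, 100 if w <= 4 else 80, 1000 if w <= 4 else 950) for w in range(1, 9)]
rows += [WeeklySellOut("B", w, 50 if w <= 4 else 48, 500) for w in range(1, 9)]
for chain, ref_change, market_change in sell_out_change_by_chain(rows, last_week=8):
    print(f"{chain}: reference {ref_change:+.1f}%, market {market_change:+.1f}%")
```

Keeping the arithmetic in code and letting the model only phrase the question and the answer is one way to limit the risk of invented figures.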

This democratises access to information. Profiles that today depend on analytical intermediaries (commercial directors, brand managers, key account managers) can directly access the intelligence they need for their meetings, negotiations, or portfolio decisions.

Natural language reduces the distance between business and data. The user does not need to know how the query is built or master a complex tool to start exploring. With a good access and control architecture, data becomes more accessible to non-technical profiles.

2. More context and explanatory capability

LLMs provide something particularly valuable: the ability to read and summarise large volumes of unstructured information. They can analyse reviews, incidents, chats, emails, store reports, and internal documentation to detect recurring themes or explain trends.

A well-configured LLM on retail data can go beyond describing what happened. It can contextualise: “This drop in share coincides with the launch of a promotion by the main competitor in the same period and a stockout in two of the five chains analysed.”

This explanatory capability is very difficult to replicate with traditional BI tools, which present data but do not interpret it. The human analyst does this work of connecting contexts, but can only do it for a fraction of the questions the business needs to answer.

LLMs can simultaneously process multiple signals and generate a coherent narrative that helps the decision-maker understand not just what happened, but why.

3. More agile operations and proactive decisions

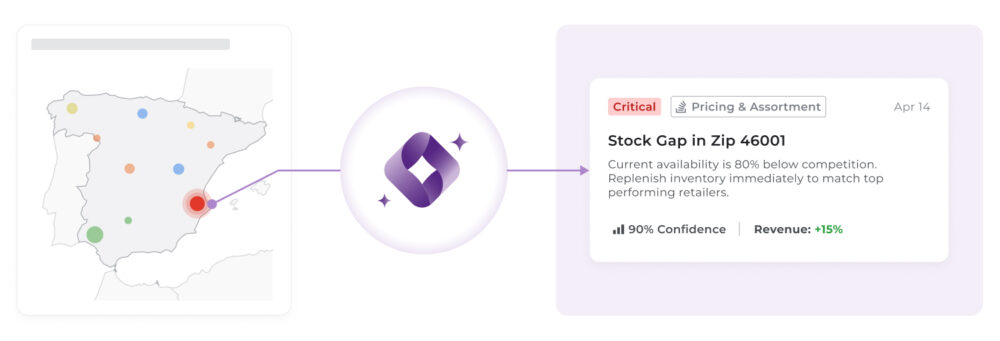

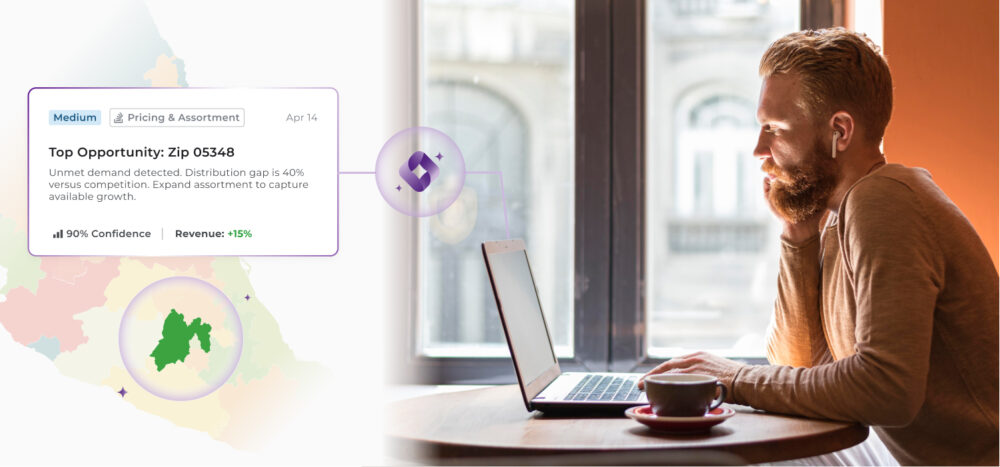

Another relevant dimension is the shift from reactive to proactive analytics. Traditional systems answer questions that someone asks. LLM-based systems can be configured to detect anomalies, identify opportunities, and generate alerts before the user even asks the question.

A Retail Intelligence system augmented with LLMs can warn: “Reference X in chain Y has been below the agreed facing standard for three weeks. Historically, this precedes a drop in sell-out of between 8% and 12% over the following four weeks.” This turns the system into a commercial radar, not just a rearview mirror.
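The detection half of such an alert can be a simple rule evaluated on every data refresh. The LLM's contribution is then to explain the flagged case in context. Below is a minimal sketch, assuming hypothetical `FacingObservation` records with consecutive week indices and an assumed mapping of agreed facing standards per SKU and chain. It flags pairs whose most recent weeks are all below the agreed level, and a missing week breaks the streak.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FacingObservation:
    sku: str
    chain: str
    week: int     # consecutive week index (assumed)
    facings: int  # facings counted on shelf that week


def facing_alerts(observations, standard, min_weeks=3):
    """Flag (sku, chain) pairs whose latest `min_weeks` consecutive weeks
    are all below the agreed facing standard.

    `standard` maps (sku, chain) -> agreed number of facings (hypothetical).
    Returns a list of (sku, chain, weeks_below).
    """
    by_pair = {}
    for obs in observations:
        by_pair.setdefault((obs.sku, obs.chain), []).append(obs)

    alerts = []
    for (sku, chain), history in by_pair.items():
        agreed = standard.get((sku, chain))
        if agreed is None:
            continue  # no agreement recorded for this pair
        streak, expected_week = 0, None
        # Walk back from the most recent week; stop at a gap or a compliant week.
        for obs in sorted(history, key=lambda o: o.week, reverse=True):
            if expected_week is not None and obs.week != expected_week:
                break
            if obs.facings >= agreed:
                break
            streak += 1
            expected_week = obs.week - 1
        if streak >= min_weeks:
            alerts.append((sku, chain, streak))
    return alerts


# Illustrative data: reference X in chain Y drops below 5 facings for three weeks.
obs = [FacingObservation("X", "Y", w, f) for w, f in enumerate([6, 6, 4, 4, 3], start=1)]
obs += [FacingObservation("Z", "Y", w, f) for w, f in enumerate([6, 4, 4, 6, 4], start=1)]
print(facing_alerts(obs, standard={("X", "Y"): 5, ("Z", "Y"): 5}))
```

The historical impact quoted in the alert (an 8% to 12% sell-out drop) would have to come from a separate analysis of past data. This sketch only detects the condition that triggers the warning.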

4. From dashboard to decision co-pilot

The sum of these capabilities (natural language, contextualisation, proactivity) forms a new type of tool: the decision co-pilot. Instead of opening a dashboard and manually extracting conclusions, the user has a conversation with the system. They pose hypotheses, receive analysis, explore alternatives, and reach a recommended action with much less cognitive effort.

This shift has significant organisational implications. It reduces dependency on analytics teams for standard reporting tasks, frees up time for higher-value work, and accelerates decision cycles throughout the commercial organisation. This dynamic brings analysis closer to the actual moment of decision.

What LLMs Don’t Solve on Their Own

It is important not to overstate expectations. LLMs are powerful, but they have relevant limitations in the context of Retail Intelligence.

- Data quality remains decisive: An LLM working on poorly structured data, with matching errors or outdated sources, will generate incorrect answers with more fluency and conviction than a traditional tool. Garbage in, garbage out applies more strongly, not less.

- Source integration remains a technical challenge: For an LLM to be able to answer about sell-out, prices, in-store execution, and competitor data coherently, someone must have built the data architecture that makes it possible. This requires investment, time, and technical skills that are not within reach of all organisations.

- Hallucination is a real risk: LLMs can generate plausible but incorrect answers, especially when asked about very specific data or when context information is incomplete. Any implementation of this type of system in commercial decision environments needs governance, verification, and human supervision mechanisms.

- Domain knowledge is still necessary: An LLM does not intrinsically know what a drop in numeric distribution in a specific channel means for a specific category. This context has to be built, whether through well-designed prompts, fine-tuning, or the integration of expert knowledge into the system architecture.
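As an example of the verification mechanisms mentioned above, one deliberately crude check is to extract every number an LLM quotes in its answer. Each number is then compared with the values the underlying query actually returned, and anything unsupported goes to human review. This is a hypothetical sketch rather than a standard method. It assumes decimals written with a point and no thousands separators, and it ignores numbers written as words.

```python
import re

# Assumes the answer writes decimals with a point and no thousands separators.
NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(answer: str, source_values, tolerance: float = 0.05):
    """Return numbers quoted in `answer` that match no value from the query
    results within `tolerance`. Signs are ignored, because prose usually
    says "fell 20%" rather than "-20%"."""
    magnitudes = [abs(v) for v in source_values]
    flagged = []
    for token in NUMBER.findall(answer):
        value = float(token)
        if not any(abs(value - m) <= tolerance for m in magnitudes):
            flagged.append(value)
    return flagged


# Hypothetical answer text and query results.
answer = ("Sell-out of the reference fell 20.0% in chain A versus 5% for the market, "
          "and 12% in chain B.")
print(unsupported_numbers(answer, source_values=[-20.0, -5.0, -4.0]))
```

Here the 12% figure has no counterpart in the query results (chain B actually changed by 4%), so the answer would be held back for review rather than shown as is.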

It’s Not the End of Retail Intelligence: It’s Its Evolution

The problem has never been the data, but the distance between the data and the decision. Throughout this article, we have seen how traditional Retail Intelligence falls short not due to a lack of analytical capabilities, but due to structural frictions: a retrospective focus, fragmentation of sources, lack of granularity, and an excessive dependency on dashboards that inform but do not guide action. LLMs do not replace this ecosystem; they reconfigure it, bringing analysis closer to the real moment of decision and reducing the effort needed to turn information into impact.

In this context, flipflow, through our co-pilot and proactive decision-making system Tyrell AI, offers an integrated approach. We consolidate retail data such as on-shelf performance, price dynamics, distribution coverage, and consumer perception into a single layer of intelligence, allowing interaction through natural language, crossing scattered signals, and generating precise and actionable recommendations. Tyrell AI accelerates the process of obtaining answers and also anticipates problems, prioritises opportunities, and directly links analysis with strategic commercial decisions.

At the same time, we do not eliminate the need for a good data architecture (if anything, we make it more critical), but we do redefine who can extract value from it and at what speed. Tyrell AI democratises access to insights without losing analytical depth, reduces dependency on manual workflows, and turns Retail Intelligence into an active system, more akin to a radar than a report.

The result is a paradigm shift: from the dashboard as a finishing point to the co-pilot as a starting point. Because in an environment where competitive advantage depends on reacting before the market, it’s not who has the most data that wins, but who converts it into decisions most effectively.