LLM Use Cases in Retail Intelligence: Practical Applications and Recommendations for Retail Teams

TL;DR

LLMs help turn data and text into actionable insights within Retail Intelligence. Their real value emerges when they are integrated with reliable data and decision-oriented processes.

Retail Intelligence Today

Retail Intelligence has matured as a discipline. Today, retail data teams handle increasing volumes of structured information (sales, stock turnover, penetration, prices) but also considerable amounts of unstructured information: customer reviews, field reports, supplier communications, social media data, and competitive signals scattered across multiple sources.

The challenge is no longer data availability. It is turning that data into concrete decisions, on time, and with enough context for business teams to act with confidence.

In this context, LLMs (Large Language Models) have shifted from being a technological curiosity to an operational tool with clear applications. Their ability to process text, reason about context, and generate structured responses makes them a natural complement to Retail Intelligence platforms that already possess a solid data infrastructure.

This article describes the most promising use cases, the nuances to consider when implementing them, and practical recommendations for teams looking to move from proof of concept to generating real value.

Specific LLM Use Cases in Retail Intelligence

1. Automated analysis of customer feedback

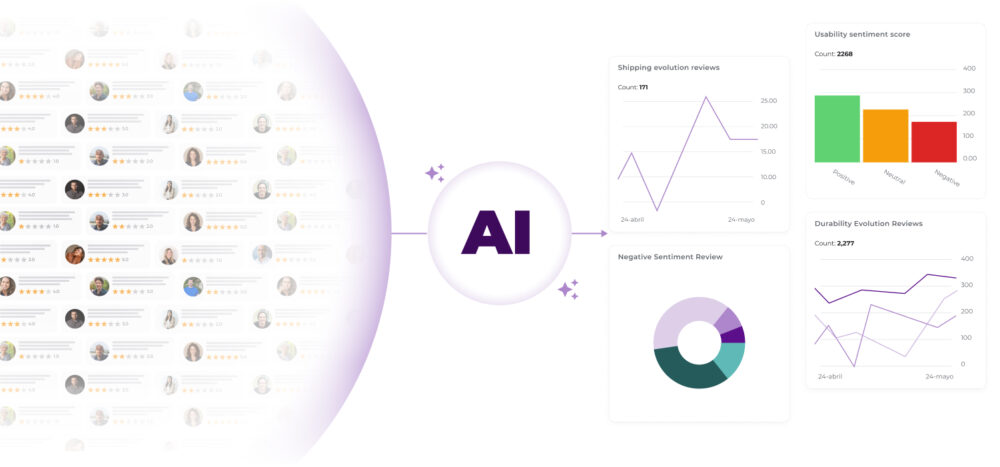

One of the clearest uses of LLMs in retail is the analysis of the voice of the customer. Product reviews, satisfaction survey responses, point-of-sale comments, and social media mentions represent a source of qualitative information that retail teams rarely exploit systematically. The volume is too high for manual analysis, and traditional sentiment analysis solutions offer insufficient granularity.

LLMs allow for the extraction of specific attributes from feedback: which product aspects generate the most satisfaction, which complaints repeat most frequently, and how the perception of a category evolves after a range change or promotional action. Beyond generic sentiment analysis, a well-configured model can classify comments by product attribute (price, quality, availability, shopping experience) and link them to specific commercial moments.

For example, a retailer can ask the system to summarise the main causes for returns in a category, identify recurring complaints about sizing or quality, or group negative reviews by theme. It is also very useful for prioritising actions. If the system detects that a group of products is accumulating comments about damaged packaging, delivery times, or lack of consistency in the description, the team can intervene sooner and coordinate with operations, logistics, or catalogue teams.
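
As a rough illustration of the classification and grouping described above, the following minimal sketch asks a model to label each comment with one of the attributes mentioned in the text and then counts negative comments per attribute. The `llm` argument is an assumption: any callable that takes a prompt string and returns the model's text reply. The prompt wording, the function names, and the JSON reply format are also illustrative and not tied to any specific provider.

```python
import json
from collections import Counter
from typing import Callable

# Attribute labels taken from the article; the prompt wording is illustrative.
ATTRIBUTES = ["price", "quality", "availability", "shopping experience"]


def build_prompt(comment: str) -> str:
    """Ask for a strict JSON reply so the answer can be parsed safely."""
    return (
        "Classify the customer comment below.\n"
        f"Allowed attributes: {', '.join(ATTRIBUTES)}.\n"
        'Reply only with JSON like {"attribute": "...", "sentiment": "negative"}.\n'
        f"Comment: {comment}"
    )


def classify_comment(comment: str, llm: Callable[[str], str]) -> dict:
    """Classify one comment; fall back to 'unclassified' on unusable output."""
    fallback = {"attribute": "unclassified", "sentiment": "neutral"}
    try:
        result = json.loads(llm(build_prompt(comment)))
    except json.JSONDecodeError:
        return fallback
    if not isinstance(result, dict) or result.get("attribute") not in ATTRIBUTES:
        return fallback
    return {
        "attribute": result["attribute"],
        "sentiment": result.get("sentiment", "neutral"),
    }


def negative_themes(comments: list[str], llm: Callable[[str], str]) -> Counter:
    """Count negative comments per attribute to help prioritise actions."""
    labels = [classify_comment(comment, llm) for comment in comments]
    return Counter(l["attribute"] for l in labels if l["sentiment"] == "negative")
```

Validating the reply against a fixed list of attributes keeps malformed or unexpected model output out of the aggregated counts.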

From a Retail Intelligence perspective, this use case holds special value because it adds context to commercial data. A drop in sales or an increase in returns is no longer seen as an isolated number but becomes connected to the actual customer experience.

2. Richer competitive monitoring

Competitive intelligence in retail has historically depended on monitoring prices and shelf presence. LLMs expand the scope of this monitoring by processing textual sources that previously went unnoticed: e-commerce product sheets, analyst notes, changes in competitors’ sales pitches, or social media activity.

Some practical examples:

- Identify which competitors have expanded their range in a specific category

- Summarise price changes and promotions by brand or format

- Detect new value propositions in product sheets

- Compare commercial messages in campaigns or landing pages

- Highlight movements that coincide with changes in own demand

This use is particularly valuable for pricing, category management, and trade marketing. In markets with high promotional turnover, how quickly a team can interpret competitor moves makes a clear difference.

The advantage lies not in the raw speed of data capture, but in the ability to provide context and relevance to information that would otherwise be lost in the noise.

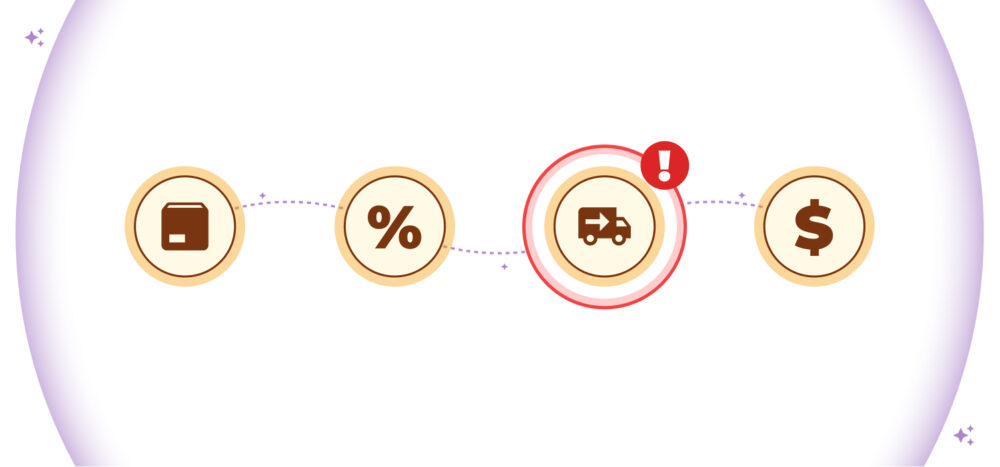

3. Explaining commercial anomalies

Detecting an anomaly in sales data is relatively simple with standard statistical tools. Explaining it is much harder. When a category drops by 12% in a specific week, the analyst needs to cross-reference multiple sources (checkout data, delivery information, logistical incidents, promotional activity) to build a coherent hypothesis. This process can take hours.
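
To make the detection step concrete, here is a minimal sketch that compares the current week with a trailing history using a z-score. The z-score is just one of several standard statistical options. The function name, the result keys, and the threshold of 2.0 are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def weekly_anomaly(history: list[float], current: float, threshold: float = 2.0) -> dict:
    """Compare the current week with the trailing history using a z-score."""
    baseline = mean(history)
    spread = stdev(history)  # sample standard deviation; needs at least 2 points
    z_score = (current - baseline) / spread if spread > 0 else 0.0
    pct_change = (current - baseline) * 100 / baseline if baseline else None
    return {
        "baseline": baseline,
        "pct_change": pct_change,
        "z_score": z_score,
        "is_anomaly": abs(z_score) >= threshold,  # illustrative threshold
    }
```

With a history of 100, 102, 98, 101, 99 and a current week of 88, the function reports a 12% drop against the baseline and flags it as an anomaly.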

LLMs, integrated into the data flow, can automate an initial diagnostic layer: which variables seem most related to the anomaly, which stores concentrate the problem, or what changes occurred just before the deviation. From there, they can generate an explanatory hypothesis that the analyst can validate or discard in minutes instead of hours.
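
One possible shape for this diagnostic layer is sketched below. It ranks stores by their share of the shortfall and assembles a short brief that could be passed to a model. All names here (`store_contributions`, `diagnostic_brief`, the brief wording) are hypothetical, and the expected sales per store are assumed to come from an existing forecast or baseline.

```python
def store_contributions(expected: dict[str, float], actual: dict[str, float]) -> list[tuple[str, float]]:
    """Rank stores by their share of the total shortfall, largest first."""
    gaps = {store: expected[store] - actual.get(store, 0.0) for store in expected}
    total_gap = sum(gap for gap in gaps.values() if gap > 0)
    if total_gap == 0:
        return []
    shares = [(store, gap / total_gap) for store, gap in gaps.items() if gap > 0]
    return sorted(shares, key=lambda item: item[1], reverse=True)


def diagnostic_brief(category: str, week: str, shares: list[tuple[str, float]],
                     events: list[str], top_n: int = 3) -> str:
    """Assemble a compact, data-grounded brief for the model to reason over."""
    lines = [f"Category: {category}", f"Week: {week}", "Stores concentrating the shortfall:"]
    lines += [f"- {store}: {share:.0%} of the gap" for store, share in shares[:top_n]]
    lines.append("Changes recorded just before the deviation:")
    lines += [f"- {event}" for event in events] if events else ["- none recorded"]
    lines.append("Propose one hypothesis and state which data would confirm or refute it.")
    return "\n".join(lines)
```

Computing the numbers in code and leaving only the interpretation to the model keeps the hypothesis tied to figures the analyst can check.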

The goal is not to replace the analyst’s judgement, but to reduce the time needed to reach a reasonable hypothesis. This frees up capacity for second-order analysis: understanding why something is happening, not just what has happened.

4. Support for category managers and trade marketing teams

Category managers and trade marketing teams handle a particularly demanding mix of information. They need to understand turnover, margin, promotional elasticity, store execution, channel performance, competitor actions, and consumer response. Doing all this with agility is not always easy.

An LLM-based system can serve as a support layer for very specific tasks, for example:

- Generate weekly summaries by category

- Compare performance between regions or store clusters

- Detect SKUs at risk of stockouts or margin erosion

- Synthesise promotional execution and its results

- Explain relevant deviations with a clear narrative

- Prepare executive reports for commercial meetings

This type of support has two practical effects. On one hand, it reduces the time spent on repetitive data collection and summarisation tasks. On the other, it improves the ability to arrive at meetings with a more structured reading of the business.
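
One of the tasks listed above, detecting SKUs at risk of stockouts, can be approximated with a simple days-of-cover rule before any model is involved. The sketch below is only one possible heuristic. The `SkuStatus` fields and the two-day safety margin are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class SkuStatus:
    sku: str
    stock_units: float
    avg_daily_sales: float
    lead_time_days: float  # days needed to replenish, assumed known per SKU


def days_of_cover(status: SkuStatus) -> float:
    """Days the current stock lasts at the average sales rate."""
    if status.avg_daily_sales <= 0:
        return float("inf")  # no sales means no stockout pressure
    return status.stock_units / status.avg_daily_sales


def at_risk(skus: list[SkuStatus], safety_days: float = 2.0) -> list[SkuStatus]:
    """Flag SKUs whose cover is shorter than lead time plus a safety margin."""
    flagged = [s for s in skus if days_of_cover(s) < s.lead_time_days + safety_days]
    return sorted(flagged, key=days_of_cover)  # most urgent first
```

A list produced this way gives the model concrete items to summarise in the weekly report, so it does not have to infer risk from raw tables.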

McKinsey highlights that generative AI has clear potential in synthesis, documentation, and knowledge support tasks. In retail, this promise fits very well with the needs of category management and trade marketing, where information is spread across many sources and the demand for a response is constant.

5. Internal assistants for retail teams

Perhaps the use case with the highest short-term adoption is that of internal conversational assistants geared towards data queries. Instead of depending on an analyst or knowing how to write a SQL query, a store manager, sales technician, or area manager can ask questions in natural language about the performance of their area and receive an immediate response with the relevant data and necessary context.

“What is the evolution of the snacks category in the impulse channel this quarter compared to the same period last year?” is the type of question that currently requires technical mediation. With a well-built assistant on reliable data, the answer should be available in seconds for anyone on the team.
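
A question like this can be served by a fixed, parameterised query, assuming the model's only job is to extract the parameters (category, channel, year, quarter) from the natural-language request. The sketch below shows that query against an in-memory SQLite database. The `sales` table, its columns, and the sample figures are hypothetical and exist only to make the example runnable.

```python
import sqlite3


def demo_connection() -> sqlite3.Connection:
    """Build a tiny in-memory database with a hypothetical sales schema."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE sales (category TEXT, channel TEXT, "
        "year INTEGER, quarter INTEGER, amount REAL)"
    )
    conn.executemany(
        "INSERT INTO sales VALUES (?, ?, ?, ?, ?)",
        [
            ("snacks", "impulse", 2023, 2, 1000.0),
            ("snacks", "impulse", 2024, 2, 1100.0),
            ("snacks", "impulse", 2024, 2, 50.0),
            ("snacks", "grocery", 2024, 2, 900.0),
        ],
    )
    return conn


def yoy_sales(conn: sqlite3.Connection, category: str, channel: str,
              year: int, quarter: int):
    """Return (current, previous, pct_change) for one category and channel."""
    sql = (
        "SELECT year, SUM(amount) FROM sales "
        "WHERE category = ? AND channel = ? AND quarter = ? AND year IN (?, ?) "
        "GROUP BY year"
    )
    rows = dict(conn.execute(sql, (category, channel, quarter, year, year - 1)).fetchall())
    current = rows.get(year, 0.0)
    previous = rows.get(year - 1, 0.0)
    # Without a prior-year figure the comparison is undefined, so return None.
    pct_change = (current - previous) * 100 / previous if previous else None
    return current, previous, pct_change
```

Because the SQL text is fixed and values are passed as bound parameters, nothing the model generates is executed as SQL, which limits the damage a wrong or manipulated extraction can do.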

The effect on the organisation is significant: democratisation of data access, reduction of bottlenecks in analysis teams, and greater speed in operational decision-making.

Recommendations for Retail Teams

Adopting LLMs within Retail Intelligence can provide a lot of value, but it should be done with focus and method. Here are some practical recommendations for getting off to a good start:

Prioritise use cases with visible impact

The temptation in AI projects is to start with what is technically interesting. The recommendation is to start with what hurts. Identify the most frequent frictions in the workflow of analysis, category management, or trade teams: processes that consume disproportionate time, decisions delayed by a lack of information synthesis, reports that no one reads because they arrive late. That is where LLMs generate quickly visible value.

Review data quality before scaling

LLMs cannot reliably tell whether the data they receive is poorly structured, incomplete, or inconsistent. A model working on outdated product information or on sales data with gaps will produce responses that seem coherent but lead to erroneous conclusions. Before deploying any application in production, it is worth auditing the quality of the data sources that will feed the system.
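
A basic audit of this kind can start with a few simple checks. The sketch below looks for gaps in the sales calendar, product records that have not been updated recently, and rows with missing required fields. The function names and the 90-day freshness threshold are assumptions, and real audits would normally cover many more rules.

```python
from datetime import date, timedelta


def missing_days(dates: list[date]) -> list[date]:
    """Return calendar days absent between the first and last sales date."""
    if not dates:
        return []
    present = set(dates)
    start, end = min(present), max(present)
    span = (end - start).days + 1
    all_days = (start + timedelta(days=offset) for offset in range(span))
    return [day for day in all_days if day not in present]


def stale_products(last_updated: dict[str, date], today: date,
                   max_age_days: int = 90) -> list[str]:
    """List SKUs whose product record is older than the allowed age."""
    return sorted(
        sku for sku, updated in last_updated.items()
        if (today - updated).days > max_age_days
    )


def incomplete_rows(rows: list[dict], required: list[str]) -> list[int]:
    """Return indexes of rows where a required field is missing or empty."""
    return [
        index for index, row in enumerate(rows)
        if any(row.get(field) in (None, "") for field in required)
    ]
```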

Combine structured and unstructured data

An important part of the differential value of LLMs lies in joining both worlds. If the system only queries sales and stock, the jump compared to a traditional tool may be limited. If it also incorporates reviews, receipts, store reports, or operational documentation, the quality of the insight improves significantly.

Design experiences intended for end users

A technically correct application that business users do not adopt generates no value. The interface design, the level of detail in responses, the type of questions the system can and cannot answer: everything must be calibrated for the end-user profile, not for the technical team building it. The more natural the interaction, the higher the adoption by non-technical profiles.

Maintain human supervision and clear controls

LLM responses can contain errors, especially when the model works with unfamiliar data or is asked to infer beyond what the context allows. In Retail Intelligence applications, where decisions have direct commercial consequences, it is essential to maintain validation controls: indicate data sources, make uncertainty explicit when it exists, and establish human review processes for outputs that feed high-impact decisions.
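
These controls can be expressed as a simple routing rule, sketched below under stated assumptions. Each answer is assumed to carry its cited sources, a confidence score between 0 and 1 produced somewhere upstream, and a flag marking high-impact use. The `Answer` structure, the 0.7 threshold, and the three outcomes are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    text: str
    sources: list[str] = field(default_factory=list)  # datasets or documents cited
    confidence: float = 0.0  # hypothetical 0..1 score produced upstream
    high_impact: bool = False  # feeds a decision with direct commercial impact


def review_decision(answer: Answer, min_confidence: float = 0.7) -> str:
    """Decide whether an answer is rejected, reviewed, or released."""
    if not answer.sources:
        return "reject"  # an answer without a cited source cannot be verified
    if answer.high_impact or answer.confidence < min_confidence:
        return "human_review"
    return "auto_release"
```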

Measure productivity and decision speed

To evaluate success, it is not enough to measure the use of the tool. It is also advisable to track indicators such as:

- Time saved in report preparation

- Speed in answering business questions

- Number of queries resolved without manual intervention

- Adoption by commercial teams

- Improvement in analysis and reaction times

Establish a baseline before implementing and measure the results once you start using your Retail Intelligence tool connected to LLMs. AI initiatives work best when linked to specific operational results.
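
The before-and-after comparison can be as simple as the sketch below, which compares median report preparation times. The function name and result keys are hypothetical. The median is one reasonable choice because a few unusually slow reports do not distort it.

```python
from statistics import median


def improvement(baseline_minutes: list[float], after_minutes: list[float]) -> dict:
    """Compare median task time before and after the rollout."""
    before = median(baseline_minutes)
    after = median(after_minutes)
    pct_reduction = (before - after) * 100 / before if before else None
    return {
        "baseline_median": before,
        "after_median": after,
        "pct_reduction": pct_reduction,
    }
```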

Start small, scale with discretion

A well-defined pilot (for a specific category, team, or use case) generates learnings that a broad rollout blurs. It allows for detecting failures before they have an organisational impact, validating the value proposition with real users, and building the internal case for scaling with credibility.

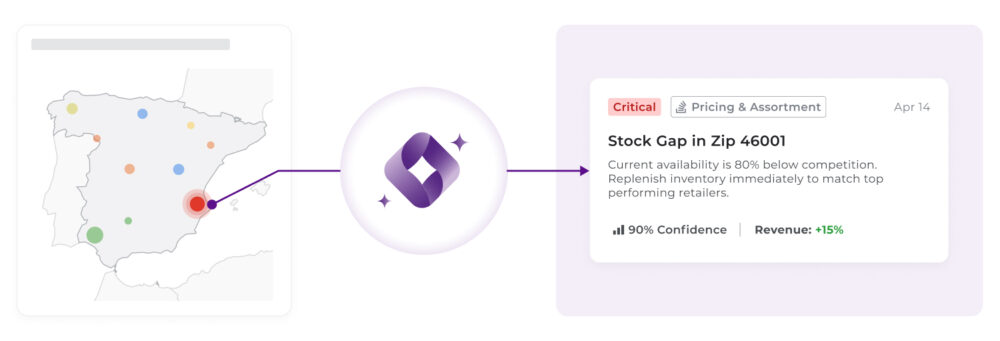

Conclusion: From Experimentation to Real Value

Ultimately, the real leap is not in adding LLMs as an additional layer, but in integrating them into a Retail Intelligence architecture capable of connecting data, context, and decisions within the same operational flow. When this integration is solid, technology stops being perceived as an isolated tool and becomes part of the teams’ daily routine, directly influencing how they prioritise, analyse, and act.

This is where an approach like that of flipflow makes sense. Beyond centralising information, the differential value lies in structuring the data so that it can be activated: combining internal and external sources, offering a unified view of the market, and facilitating the translation of insights into actionable decisions without friction.

In this scenario, LLMs do not replace Retail Intelligence platforms; they amplify their impact. They allow for more natural interaction with information, reduce the distance between question and response, and accelerate the critical step between detecting an opportunity and executing an action. But this capacity only fully materialises when supported by a robust, well-modelled, and business-oriented data foundation.

The key is to build a system where data, analytics, and generative capabilities work in a coordinated manner, aligned with business needs. When this fit is real, teams not only understand better what is happening but can also act accordingly with much more fluidity and judgement. This is where intelligence stops being analysis and becomes action.